From designing studies and testing prototypes to launching them and driving insights, kickstart your next user research project with this comprehensive guide to Hubble.

What is Hubble?

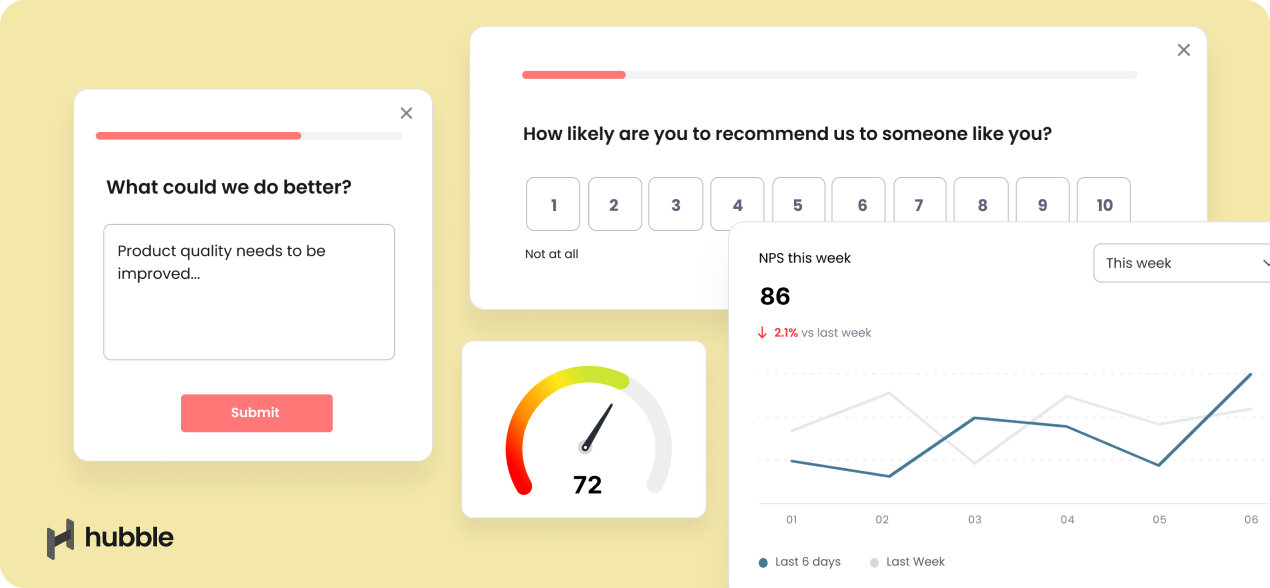

Hubble is a unified user research tool for product teams to continuously collect feedback from users. Hubble offers a suite of tools including contextual in-product surveys, usability tests, prototype tests, and user segmentation to collect customer insights in all stages of product development.

Hubble allows product teams to drive decisions based on user-driven data with in-product surveys and unmoderated studies. You can build a lean UX research practice to test your design early, regularly hear from your users, and ultimately streamline the research protocol by recruiting participants, running studies, and analyzing data in a single tool.

Common use cases of Hubble

At a high level, Hubble offers two study types, each of which can be customized:

- In-product surveys allow you to show survey modal pop-ups on your live websites to collect instant feedback from users.

- Unmoderated studies let you run usability studies, prototype tests with Figma prototypes, card sorting, A/B testing, and more.

Customizing each study type helps you address some of the typical use cases in the product development cycle:

- Validating product ideas and concepts early in the design process

- Identifying potential usability issues with prototype testing

- Running A/B tests for informed design decision making

- Conducting usability studies to improve your product as you iterate on it

- Collecting contextual user feedback in real time and gauging customer satisfaction with the product

When to use in-product surveys vs. unmoderated studies

Whether to run unmoderated studies or in-product surveys in your next user research project depends on multiple factors, including your learning goals, the type of data you want, the stage of the product life cycle, and the context of your study.

While there is no straightforward formula for when to use one method over the other, below is a quick reference on the pros and cons of each approach:

Pros and cons of surveys

Pros / Good for:

- Running summative studies, in which your product is generally available and you need to evaluate how it is doing.

- Getting contextual feedback by triggering surveys at specific flows or events in the product.

- Targeting specific audiences and actual users of the product, since surveys can be triggered for specific audiences or events.

- Collecting quantitative data at a volume that supports statistically significant findings to guide your design decisions.

- Recruiting users to participate in follow-up or unmoderated studies.

Cons / Not good for:

- Detailed, lengthy studies because surveys are shown as small modals or pop-ups on the side of the screen, making it difficult for testers to concentrate for too long.

- Early prototype testing, as it is difficult to present the prototype in a survey modal. Unmoderated usability tests are better suited for this.

Pros and cons of unmoderated studies

Pros / Good for:

- Iterative prototype testing by inserting Figma prototype links and relevant tasks for study objectives.

- Task-based tests in which you want to observe how users go through each task. The study result page highlights the different paths that users take to succeed or fail at the tasks. To learn more, see making sense of prototype task results in an unmoderated study.

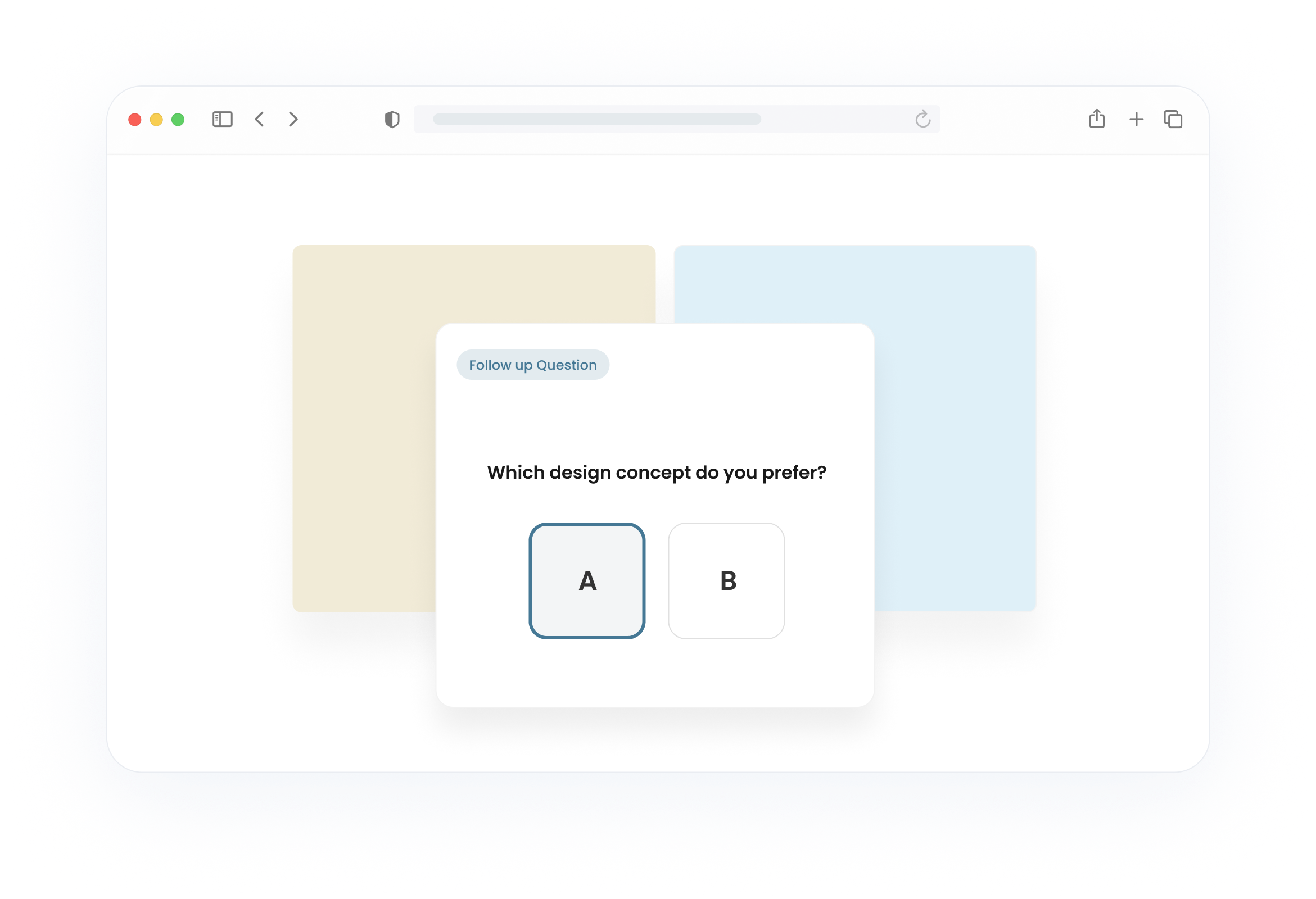

- A/B testing to gain feedback and preference data when you have more than one design variation. Learn more about how to run A/B or split testing.

- Card sorting to understand users’ mental processes and help with information architecture. Learn more about running a card sorting test.

- Collecting in-depth qualitative data. Unlike in-product surveys, the ample screen real estate in unmoderated studies lets participants share more detailed feedback.

Cons / Not good for:

- Collecting live website feedback and quantitative data, for which in-product surveys are more effective.

Building and designing studies in Hubble

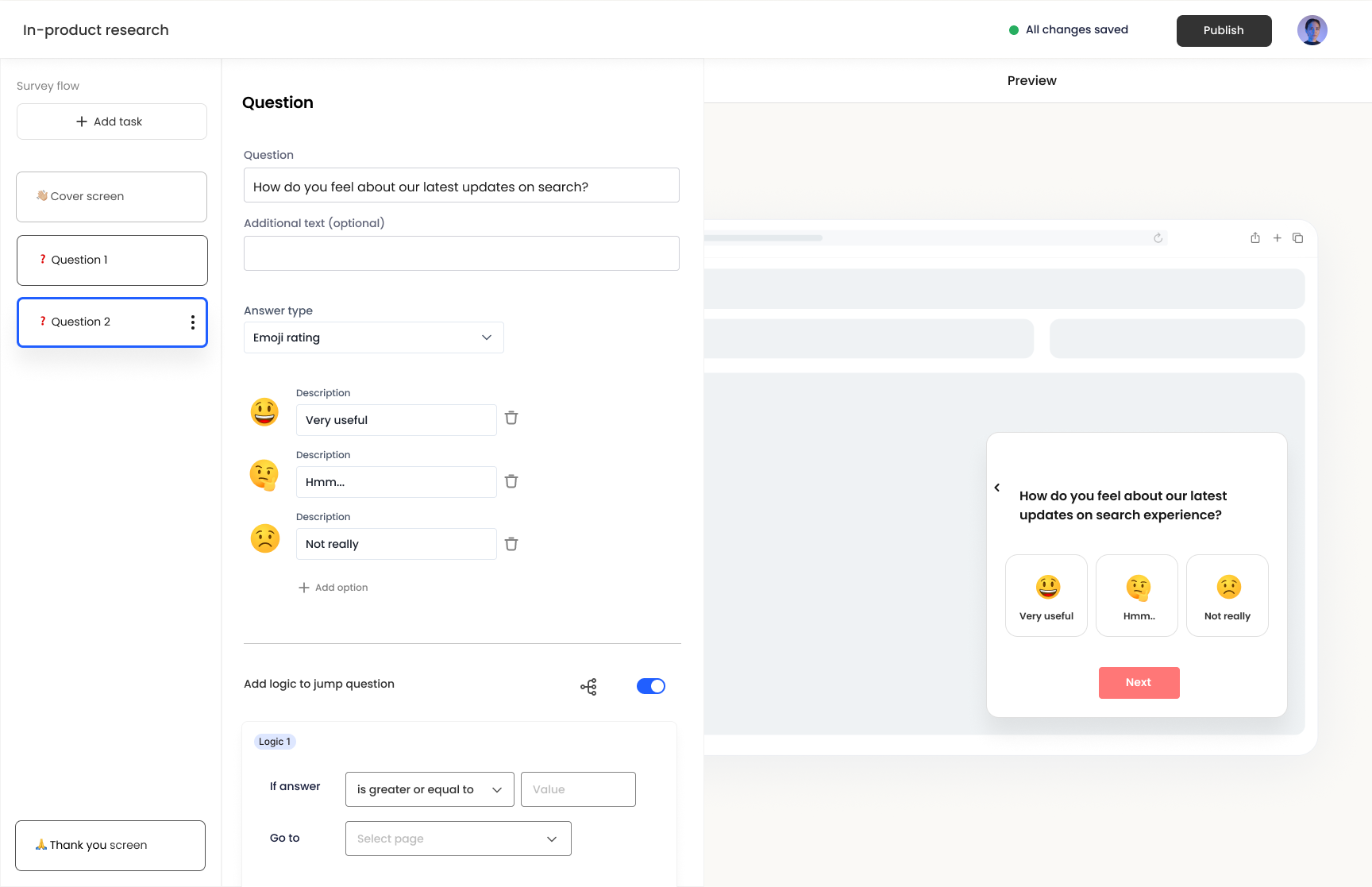

In Hubble, you can customize studies by using various question types for in-product surveys and unmoderated usability tests. In the leftmost column, you can manage the study flow by adding, removing, and reordering questions. Once a question is added, you can edit its details in the second column. As you build the study, the preview is displayed on the right half of the screen. Make sure to preview the study so that it appears as you intend.

Below are task options for unmoderated studies and in-product surveys:

- Text Statement (Unmoderated study): Shows a page with text, including a title and paragraph. This form is useful for introductions and for providing additional information or guidance.

- Prototype Task (Unmoderated study): Allows you to load a Figma prototype for testing. On the page, you can set expected paths to tell Hubble which path is the happy path that counts as a task success.

- Card Sort (Unmoderated study): Displays an interface for running card sort questions. Card sorting is especially helpful for understanding users’ mental models and information architecture.

- External Link: Lets you insert external links into the study for users to click. Examples include an NDA document, product websites, or additional survey links.

- Net Promoter Score (NPS) (In-product survey): Displays a module specifically designed for running Net Promoter Score surveys. It auto-generates the numerical scale question and relevant follow-up questions to help kickstart the survey. The results are also easy to interpret because the score and distribution are visualized.

The Question Task provides question forms that can be customized to your needs. Below are the options:

- Multi Answer Choices: Shows a number of options to select from. This option supports both single-select and multi-select questions.

- Numerical Scale: Provides a visual numerical scale for users to select from. You can customize the low and high values of the scale.

- Star Rating: Shows a visual star rating for users to respond with.

- Emoji Rating: A simple yet intuitive way to gauge emotional response from users. You can specify the emojis and their descriptions.

- Open Text: Provides a text field for users to respond in and elaborate. This option is frequently used as a follow-up to collect additional qualitative data.

- Yes or No Question [Unmoderated study only]: Provides a closed yes/no question for users to answer.

- Image Preference [Unmoderated study only]: Allows you to upload multiple images and have users select from them.

Types of unmoderated studies

Unmoderated testing is a user research method in which participants independently interact with a product, service, or prototype without a researcher present. Product teams can build studies digitally and capture participants’ screen and video recordings for data analysis. Unmoderated studies are very often conducted remotely, allowing participants to engage with the product in their own environment and at their own pace.

Prototype testing with Hubble

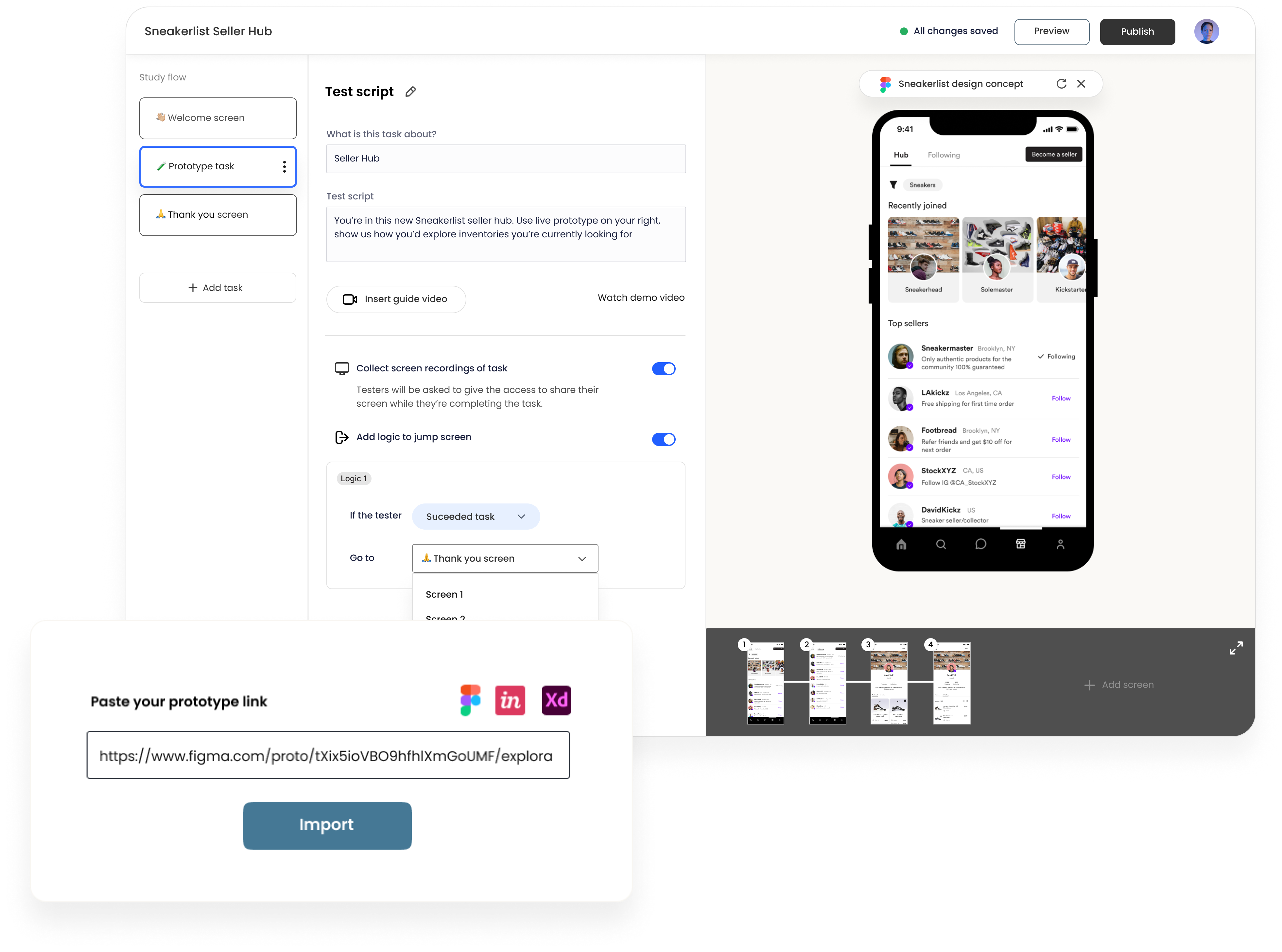

Hubble supports importing Figma prototypes directly for user testing. For a step-by-step guide on importing prototypes into unmoderated studies, refer to this help article. To import a Figma prototype into your unmoderated study, first add a Prototype Task to the study flow. The rendered preview section of the screen will then ask for a URL to your prototype. We encourage testing prototypes early in the design process, regardless of their fidelity.

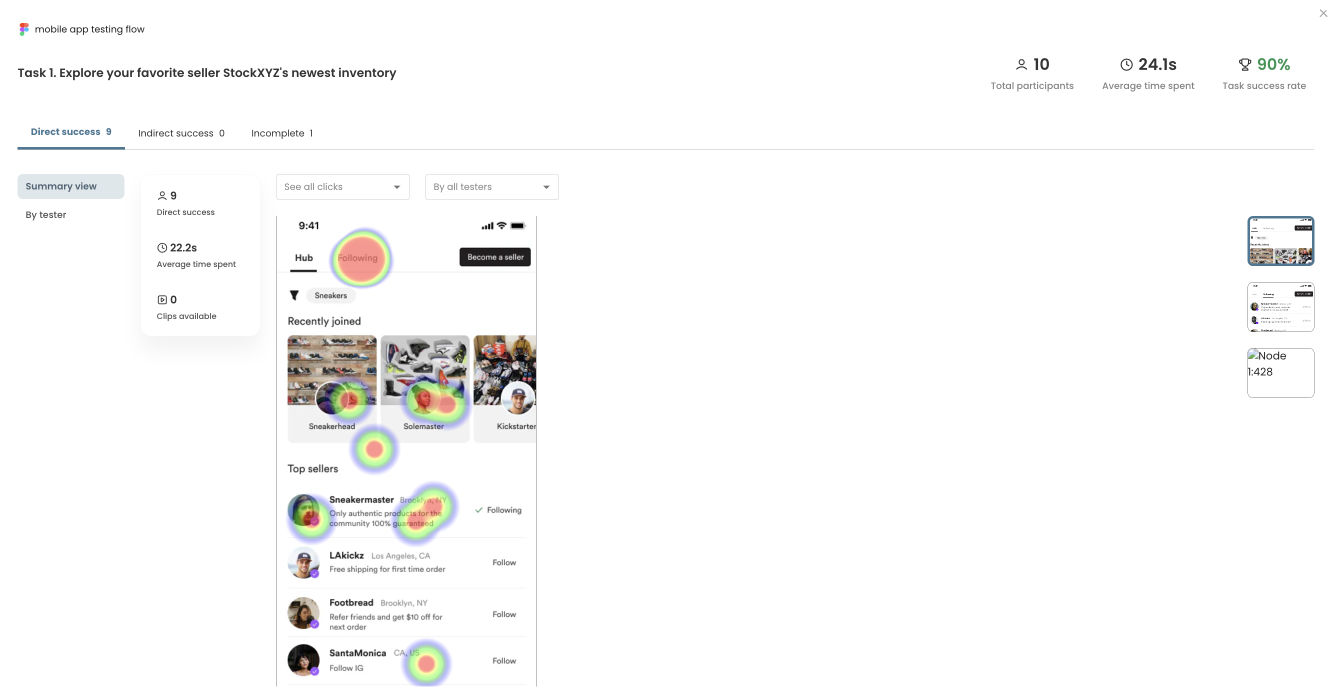

Building unmoderated tests, especially those that involve Figma prototypes, requires creating relevant tasks, describing the context and scenario, and defining which actions or flows count as a task success. If you have an ideal happy path that you expect your users to take, you can define that flow as an expected path. As participants interact with the prototype, their clicks and interactions are recorded and visualized on the results page as a heat map.

Task outcomes fall into three categories. A task is considered a success as long as the end screen matches the final destination set in the expected path.

- Direct success: A user follows the predefined expected path to complete a given task.

- Indirect success: A user completes a task by reaching the desired end screen, but through a path that was not defined as an expected path.

- Incomplete: A user quits, or declares the task complete on the wrong end screen.
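
The three outcomes above can be expressed as a small classifier. This is only a sketch for reasoning about results: the function name classifyTask and the representation of a path as an array of screen names are assumptions made for illustration, not Hubble's implementation or export format.

```javascript
// Hypothetical helper: classify one participant's attempt at a prototype task.
// `expectedPath` and `actualPath` are arrays of screen names (assumed data shape).
function classifyTask(expectedPath, actualPath) {
  // A participant who never navigated anywhere cannot have completed the task.
  if (actualPath.length === 0) return "incomplete";

  const goalScreen = expectedPath[expectedPath.length - 1];
  const endScreen = actualPath[actualPath.length - 1];

  // Ending anywhere other than the final destination counts as incomplete.
  if (endScreen !== goalScreen) return "incomplete";

  // Same end screen: direct if every step matches the expected path, else indirect.
  const followedExpectedPath =
    expectedPath.length === actualPath.length &&
    expectedPath.every((screen, i) => screen === actualPath[i]);

  return followedExpectedPath ? "direct success" : "indirect success";
}
```

For example, with an expected path of Home, Cart, Checkout, a participant who detours through a search screen but still ends on Checkout would be classified as an indirect success.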

Conducting A/B testing or split testing

A/B testing is a research technique for comparing two or more design variations to evaluate how each performs relative to the others. In Hubble, you can set up A/B testing with unmoderated studies using a combination of prototype tasks and image preference questions.

For a step-by-step guide on designing A/B tests, refer to How can I run A/B testing or split testing? The design essentially involves including two prototype designs in the study and asking relevant follow-up questions. An image preference question is a quick way to gauge preference.

Open and closed card sorting

Card sorting is a research method that helps uncover users’ mental models and preferences for organizing information. Card sorting provides insights into better structuring the content of your website as participants categorize a set of cards or topics into groups that make sense to them.

With Hubble, you can run both open and closed card sort studies by inserting the Card Sort task option. Once added, you can customize the card sort by adding card items, shuffling them, and choosing whether to use an open or closed card sort.

For a more detailed step-by-step guide, see use card sorting to have testers categorize information.

Running first click tests

First click testing is a usability testing technique that focuses on the initial interaction users have with a website, application, or interface. It aims to evaluate the efficiency and intuitiveness of the design by homing in on the pivotal first click. This methodology is rooted in the understanding that the first interaction often sets the tone for the entire user experience.

According to research, "if the user's first click was correct, the chances of getting the overall scenario correct was .87." Making the correct first click affects the overall task success rate: clicking on the right button led to an overall 87% task success rate, while clicking on the incorrect button resulted in a 46% task success rate.

In Hubble, the results page visualizes the participants’ interactions through heat maps. Hubble’s unmoderated study module will automatically generate heat maps and click filters to facilitate your data analysis process. With their first clicks and other subsequent clicks visualized as a heat map, you can identify potential design issues and make iterative improvements.
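
To run the same kind of comparison on your own study, you can split task success by whether the first click was correct. The sketch below assumes a hypothetical record shape with firstClickCorrect and taskSucceeded booleans per session; these field names are illustrative and do not describe Hubble's export format.

```javascript
// Hypothetical analysis helper: task success rate grouped by first-click correctness.
// Each session is assumed to look like { firstClickCorrect: boolean, taskSucceeded: boolean }.
function successRateByFirstClick(sessions) {
  const tally = {
    correct: { total: 0, succeeded: 0 },
    incorrect: { total: 0, succeeded: 0 },
  };

  for (const session of sessions) {
    const bucket = session.firstClickCorrect ? tally.correct : tally.incorrect;
    bucket.total += 1;
    if (session.taskSucceeded) bucket.succeeded += 1;
  }

  // Return null for a group with no sessions rather than dividing by zero.
  const rate = (bucket) => (bucket.total === 0 ? null : bucket.succeeded / bucket.total);

  return { correct: rate(tally.correct), incorrect: rate(tally.incorrect) };
}
```

A large gap between the two rates suggests that the entry point to the flow, rather than the later steps, is where participants go wrong.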

Best practices for unmoderated studies

Unmoderated studies let participants take part on their own schedule without a moderator present. As flexible and efficient as they are, they require careful thought when designing the study and writing the instructions. Below are some tips and recommendations to consider. For a detailed guide on unmoderated testing, refer to our Guide on Unmoderated Usability Tests, Prototype Tests and Concept Tests.

1. Personalize the study

Because participants are staring at their own screen and speaking to themselves, unmoderated studies can feel one-directional. Giving participants a sense of contribution and helping them relate to the topic area encourages them to be more engaged.

At Hubble, we are always delivering features to make the unmoderated study experience more pleasant. By inserting guided introduction videos, you can introduce yourself and the team, explain the purpose of the study, and share any additional details or instructions for participants.

2. Clarify what to expect in the study

Communicate the high-level purpose of the study, how long it will take, and what its general flow will be so that participants know what to expect.

3. Use plain language and avoid jargon

Think about the audience that will be participating in the study. In particular, avoid jargon that your product teams use internally. What is obvious to product teams that live and breathe the product area might not be as obvious to participants.

4. Don’t give away too many details or instructions on the tasks

With prototype task descriptions, don’t give away too many details. Frame structured tasks around the end goal that participants should ideally achieve. In other words, what is the job that your users should accomplish with the specific flow or prototype?

5. Follow up on the hows and whys

It is common for participants to answer questions without elaboration and quickly move on to the next. Because there is no moderator to converse with participants and ask follow-up questions in unmoderated studies, it is critical to always ask why participants gave a certain piece of feedback or rating.

6. Pilot test the study internally

Lastly, test the study internally to make final changes and fix any potential misinterpretations. Have a second pair of eyes from the product team review the flow and read through the instructions and tasks.

Designing in-product surveys for real-time feedback

Unlike traditional surveys, in-product surveys let you attach a survey modal that pops up as real users engage with your product or website. This allows you to collect live, contextual feedback from users in real time as they interact with the product. A simple example is gauging customer experience after a transaction has been made.

Building in-product surveys is similar to designing unmoderated studies. The list of question options varies slightly but offers similar question formats, including multi-answer choices, emoji rating, numerical scale, star rating, and open text. Keep in mind that because the survey modal covers only a portion of the screen, the length of text can be limited.

See our step-by-step guide on launching in-product surveys in this article.

What makes in-product surveys powerful is that you can target specific audiences in real time by specifying the events or actions that users take. The configuration is available when you publish the survey and provides four trigger options:

- Page URL: You can provide the URL of a specific page where you want the survey to be prompted. Note that you can add as many URLs as you need for a single survey.

- CSS selector: You can prompt a survey when a user interacts with a specific element within a page. For example, you can select a certain div block or CTA button to trigger the survey.

- Event: You can prompt a survey when a predefined event is triggered. Events can be defined flexibly as long as they are set up properly, but this requires adding the event tracking code Hubble.track('eventName') to your source code.

- Manually via SDK API: You can display surveys manually through the installed Hubble JavaScript SDK. Inserting window.Hubble.show(id) with the survey ID in your source code displays the survey on the page and at the time you choose.
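
The last two trigger options require code on your side. The following is a minimal sketch of how the two calls mentioned above might be wrapped so that a page does not break if the SDK has not loaded yet. Only Hubble.track and window.Hubble.show come from this guide; the helper name notifyHubble, the event name, and the survey ID are placeholders, and the exact behavior of the SDK should be confirmed against Hubble's own documentation.

```javascript
// Hypothetical wrapper around the two SDK calls named in this guide.
// `action` is "track" or "show"; `value` is an event name or a survey ID.
// `root` defaults to the global object, which is `window` in a browser.
function notifyHubble(action, value, root = globalThis) {
  const hubble = root.Hubble;

  // If the SDK is missing or does not expose the call, skip quietly instead of throwing.
  if (!hubble || typeof hubble[action] !== "function") {
    return false;
  }

  hubble[action](value);
  return true;
}

// Example usage in your page code (both values are placeholders):
// notifyHubble("track", "checkoutCompleted"); // event-based trigger
// notifyHubble("show", "YOUR_SURVEY_ID");     // manual display via the SDK
```

Returning a boolean makes it easy to log when a survey could not be triggered, without letting a missing script interrupt the user's flow.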

To learn more about survey triggers and publishing surveys, refer to the article.

Net Promoter Score (NPS) surveys

Net Promoter Score (NPS) is a simple yet powerful tool for gauging customer loyalty and identifying opportunities for improvement. In survey projects, you can simply add the NPS option to set up an NPS study, which will open a predefined template.

For a step-by-step guide on setting up an NPS survey, refer to the article How to setup Net Promoter Score (NPS) survey.

On the results page of the project, the score is automatically calculated by identifying the ratio of promoters, passives, and detractors, and it is shown along with the score distribution. To make better sense of NPS results, see our dedicated article Making sense of Net Promoter Score (NPS).
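
For reference, the standard NPS calculation can be sketched as follows. It uses the conventional definition on a 0 to 10 scale (promoters score 9 or 10, passives 7 or 8, detractors 0 to 6) and subtracts the percentage of detractors from the percentage of promoters. The function name is illustrative, and this guide does not state whether Hubble rounds or presents the score in exactly this way.

```javascript
// Standard NPS: percentage of promoters minus percentage of detractors.
// `ratings` is an array of integers from 0 to 10. Returns null when there are no responses.
function netPromoterScore(ratings) {
  if (ratings.length === 0) return null;

  let promoters = 0;
  let detractors = 0;
  for (const rating of ratings) {
    if (rating >= 9) promoters += 1;       // 9 or 10
    else if (rating <= 6) detractors += 1; // 0 through 6
    // 7 and 8 are passives: they count toward the total only
  }

  return Math.round(((promoters - detractors) / ratings.length) * 100);
}
```

The score ranges from -100 (all detractors) to 100 (all promoters). Passives do not add to either side, but they lower the magnitude of the score by increasing the total number of responses.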

Collaborating in Hubble

Collaboration is important when conducting a user research study, and Hubble makes it easy to invite collaborators and team members. Bringing in collaborators increases the visibility of ongoing projects and boosts teamwork. There are several ways to keep your team in sync throughout the project:

Inviting team members to workspace

Once you are logged in to your workspace, you can add collaborators by clicking the + Invite Collaborators option in the top right corner of the page. You can manage your team members from the account page.

Previewing studies and sharing published studies

Even if you have team members that are not included in the workspace, you can still share the progress of the study by sharing the preview version of the study with the study URL.

Once a survey or unmoderated study is published, the public link is available in the Share tab of the project page. You can use the URL to distribute the survey to participants or your team members.

Exporting study results

As study responses are submitted, the data becomes available immediately. The Summary tab displays a summarized view of all submitted responses. Under the Responses tab, you can review individual responses in a table view. To examine the data more closely, you can export the table to view it in a different tool or to share it with stakeholders.

Making the most out of Hubble

Below are a few approaches to get the most out of Hubble in your research efforts:

1. Establish a lean research practice by running small, iterative studies:

User research and product feedback collection are not one-time activities. Just as a product needs to be constantly developed and evolved to suit user needs, user research should be a continuous effort that closely involves users in product development.

With in-product surveys and unmoderated studies, Hubble allows you to easily set up, design, and launch studies. Running iterative studies at a small scale leaves room for failure, as you can diagnose what works and what doesn’t early in the development cycle. Moreover, if you are running usability studies, more than 80% of the usability problems are found with the first five participants. There is a significant diminishing return for identifying usability problems with additional participants. Instead of running an elaborate study with 10+ participants, it is highly recommended to run a series of studies with design iterations and a small number of participants.
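
The five-participant figure is consistent with a commonly cited problem-discovery model, in which the share of usability problems found by n participants is 1 - (1 - p)^n. The sketch below assumes p = 0.31, the average per-participant detection rate reported by Nielsen and Landauer. That value is not stated in this guide and varies with the product and the tasks, so treat the output as a rough planning aid rather than a guarantee.

```javascript
// Expected share of usability problems found by `participants` testers,
// assuming each tester independently finds a given problem with probability
// `detectionRate` (0.31 is an assumed average, not a Hubble figure).
function shareOfProblemsFound(participants, detectionRate = 0.31) {
  return 1 - Math.pow(1 - detectionRate, participants);
}

// Compare how much each additional participant adds.
for (const n of [1, 3, 5, 10]) {
  const share = shareOfProblemsFound(n);
  console.log(`${n} participants: ${(share * 100).toFixed(0)}% of problems`);
}
```

Under this assumption, five participants uncover roughly 84% of problems, and doubling to ten adds comparatively little, which is the diminishing return described above.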

- For integrating the Jobs-to-be-Done framework, see our overview guide.

- To learn more about key usability metrics to track, see our comprehensive guide.

- For more on evangelizing research at your organization, see our guide Establishing your UX research strategy.

2. Engage with real users through in-product surveys to collect contextual feedback:

Collecting real, contextual feedback can be challenging, especially when scheduling participants for studies can take weeks, only to have them look back and rely on memory to share their experience with a product.

With in-product surveys, you can immediately follow up with users for feedback by triggering the survey modal on specific events or flows that users go through. This approach not only helps you evaluate how the current product is doing, but also helps maintain user engagement and satisfaction by demonstrating that you value user input and are actively seeking ways to improve the product based on feedback.

3. Use a combination of in-product surveys and unmoderated studies for a full-on study:

While in-product surveys are useful for collecting quick contextual feedback from actual users, it can be difficult to gain in-depth feedback with the limited space provided in the survey modals. To address this, you can redirect users to participate in unmoderated studies using an external link. Using a combination of both helps you keep studies small and focused on specific topics, which can also help avoid respondent fatigue.

For example, if a study is about evaluating the current purchasing experience on a website, you can use an in-product survey to capture users’ overall experience, and then follow up with a link to an unmoderated study to conduct prototype testing or A/B testing for new design improvements.

4. Streamline the research protocol, from recruiting and designing experiments to running studies and analyzing data, with Hubble:

Depending on the industry or type of target users, it can be challenging to reach actual users or a panel of participants. To help streamline the research protocol, Hubble helps you find the right pool of participants with a short turnaround. Combined with in-product surveys and the variety of tests you can run with unmoderated studies, this gives you a better understanding of your users. By keeping studies small but repeated, Hubble serves as a continuous user experience engine that helps you scale effectively as needed.

FAQs

Hubble is a unified user research tool for product teams to continuously collect feedback from users. Hubble offers a suite of tools including contextual in-product surveys, usability tests, prototype tests, and user segmentation to collect customer insights in all stages of product development.

At a high level, Hubble offers in-product surveys and unmoderated studies. You can easily customize and design the study using our pre-built templates.

Some common research methods are concept and prototype testing, usability testing, card sorting, A/B split testing, first click and 5-second tests, Net Promoter Score surveys, and more.

Hubble allows product teams to make data-driven decisions with in-product surveys and unmoderated studies. You can build a lean UX research practice to test your design early, regularly hear from your users, and ultimately streamline the research protocol by recruiting participants, running studies, and analyzing data in a single tool.

Hubble’s unmoderated study option allows you to connect Figma prototypes to its interface to easily load and design studies. Once your study is loaded, you can create follow-up tasks and relevant questions.

To learn more, see the guide section.